Tech Stack

The tech stack used for the web application is as simple as Angular, Spring, Hibernate, SQL Server while everything is hosted and managed through Microsoft Azure. The users are authenticated through the Active Directory on Azure. There is a model that serves the domain of the application which has a representation both on the Database and the java code; in terms of Spring, apart from the model, there is a controller layer, a service layer implementing the business logic and a repository layer realizing the transactions with the database. The controller layer is the complete set of the endpoints that are forming the Rest API which is used as the representation state of the described backend system towards the angular on the frontend.

The problem

In any kind of tool, technology, language there are some common mistakes either because of the source’s limitations, bad interpretation of its capabilities or lack of experience. Eventually after some time, the community that uses such tools spots such items and creates guidelines for the best practices hopefully followed by the engineers to improve the quality of the code and the performance both for the team and the product. At the time we decided to go for an official version of the project that covers the whole company, we understood that we should parse the code, revisit every decision made and reveal any limitations, bad decisions, antipatterns used on the code, security issues, items that would prevent a future scaling, maintenance but also user experience mistakes. And guess what! We found tons of items to improve!

Smokes

Rest Issues.

When Roy Fielding and his colleagues created Rest, they had exactly this in mind; an architectural style that can realize client-server stateless communication over a uniform interface. That would be the already known for decades HTTP. But apart from that, the Rest came to act as a standard way of achieving such communications and the community decorated the basic approach with much more best practices and design rules; rules that we violated a lot. In our project we found many endpoints that were clearly created per use case without trying to find a common point that could lead to an

abstraction layer that serves many similar use cases through one single generic endpoint. The HTTP methods were not properly used, the response codes were not indicating the actual status of the system and the names of the endpoints were not intuitive enough so that an end-engineer can easily navigate and consume them. Finally, the body of the responses could be an error message, a text that could be presented to the front end or a Json formatted payload with data thus making it difficult to serve a single resource through the same endpoint.

User experience.

The web application had two basic categories as use cases; to serve the employees of the company and an enhanced view and capabilities for the admins. The major problem we faced here was that trying to introduce new features it was difficult to decide where the best place is to put the new functionality. There were quite a few tabs and layouts that would bring almost identical data; actually, data of same type but just a different set addressed by some filter. Sounds familiar? It is noticed that unexperienced engineers will not try to reuse modules so that a new feature can naturally sit among others preserving uniformity, but rather apply a whole new approach based on criteria that lead to quick and dirty solutions.

Additionally, there were forms with hidden optional/mandatory fields and buttons that would not let the user know whether the request was accepted or rejected. Or cases where three views with slight visual differences could serve the exact same result having as a result that the most enhanced and handy out of the three was the eventually the only one used while the rest were only there to consume human hours for no-reason maintenance.

Testing.

It is normal for an application that is not destinated for wide use to lack extensive testing. In our project, we had low coverage with only some minor unit testing. However, since we decided to fully utilize the web application within our company, we knew we had to improve our coverage. We needed complete unit testing for methods, meaning functionalities and calculation in the business logic of our application, additionally integration testing that is one level higher so that we can ensure the smooth interaction with other systems like databases and directories. A function testing suite is always a good way to confirm that a functionality’s requirements are met as agreed with the stakeholders. With those three different types of testing, we wanted to ensure that all the agreed decisions are met and will not change by accident or misunderstanding, while the engineers can quickly detect any breaks their code is introducing during development by using the above suites that altogether offer around 80% code coverage.

Java issues.

No matter what language used to build your backend system, there are a lot of best practices that can be violated depending on the complexity of the business logic, the limitations of the language, the experience of the engineers or just not following a common convention among all the modules of the backend. Spring offers a way to structure the repository of your code and provide libraries that will abstract out functionalities that solve common issues for most of the applications. But there is always room for mistakes! You must take a step back and have a new glance at the code. At that time, it would

be good to revisit your book with the latest version of Joshua Bloch’s “Effective Java” while a static code analysis is always a great option to quickly highlight some common mistakes. This is what we also did.

With the help of SonarQube we easily identified quite some items that could be improved like variables that had the wrong scope, duplicate code, stiff signatures, complex methods with many calculations, bad naming of variables, classes, or other components and many more. There was even a case where the hashCode method was not overridden even when equals were, thus breaking the contract and eventually having abnormal behavior when used in a hashMap. A bug was just revealed out of nowhere!

A difficult item though was to understand the higher picture and clearly see that some classes on the diagram were serving complex use cases mixing calculations with state of the class and violating the SOLID principles like having Single responsibility and being Open for extension but closed for modularity; the two most basic yet important principles of object-oriented languages.

Database issues.

Database mistakes are the most expensive to fix. Every resource is dependent on the data themselves but also the relationships between them. Knowledge is power and so we decided to identify them. We noticed that data could be normalized such as there are no duplicated information in the tables, the relationship are depicting the real world and making connection of the tables through logical paths, the types are the correct ones offering both the needed functionality but keeping the database footprint as low as possible so that backups are flexible, and the transactions are not propagating redundant payloads. What is the reason to use “int” for a column that will not hold more than 20 different values or having an email column defined to store more than 320 characters?

Planning

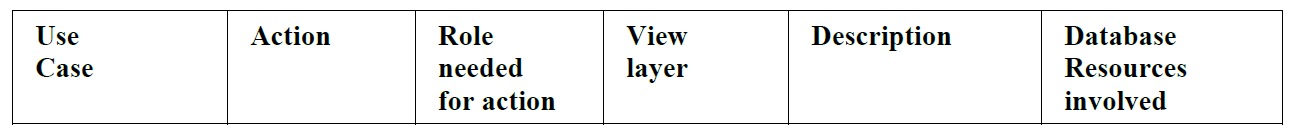

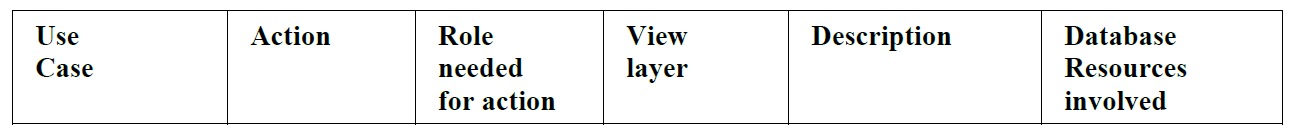

After spotting all the above items, we now had to establish a well-defined plan to address all these issues. To efficiently estimate the cost of the refactoring we first decided to write down every use case that the web application needed to serve to its users, so that we can later prioritize them and commit them to different phases based on their priority. We keep track of all the use cases in a table as simple as the one below:

Then we quickly identified obsolete behaviors or use cases that could be merged. We interacted with the end users to understand their needs and restructured the application so that it is more user friendly, easier to interact with and maintain as an engineer.

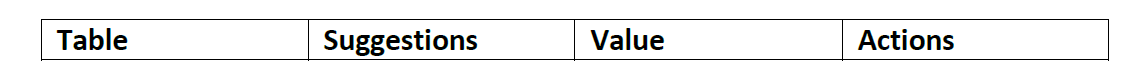

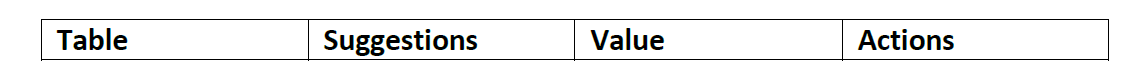

In a similar fashion we approached the database and the Rest API.

For the database we wrote down all the items for improvement per table with the cost and the value that the change will bring to the application.

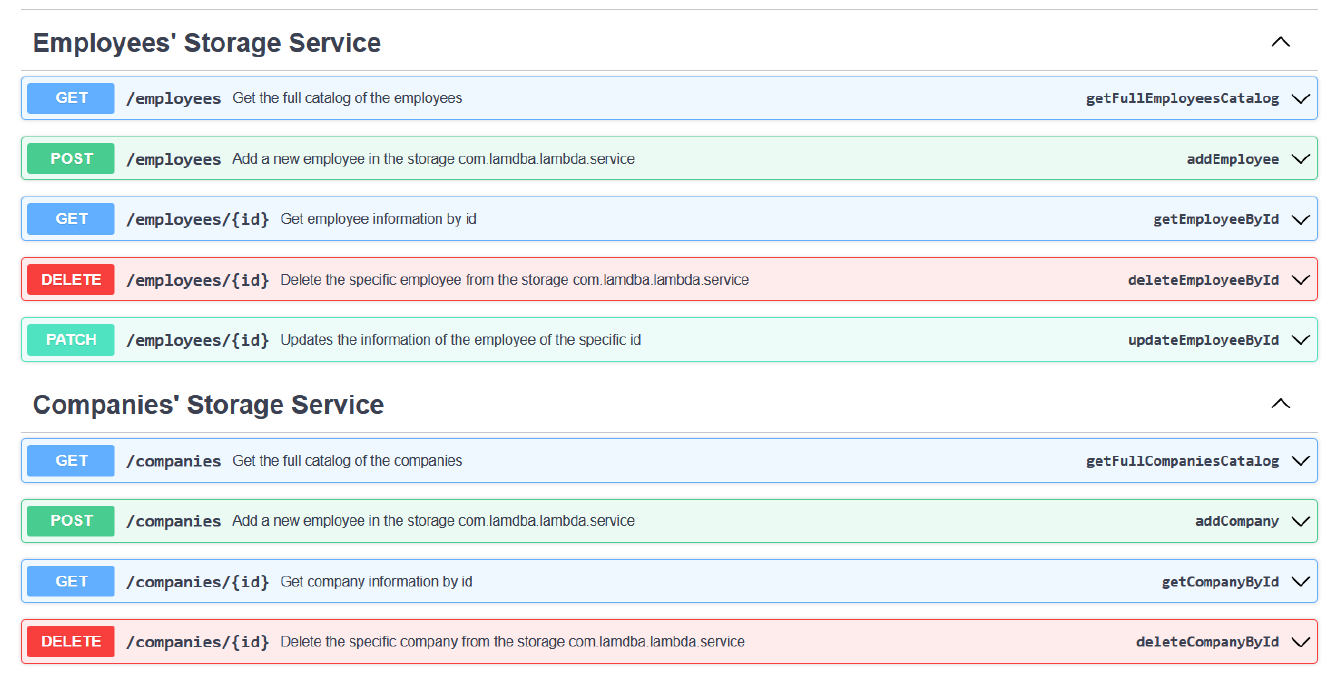

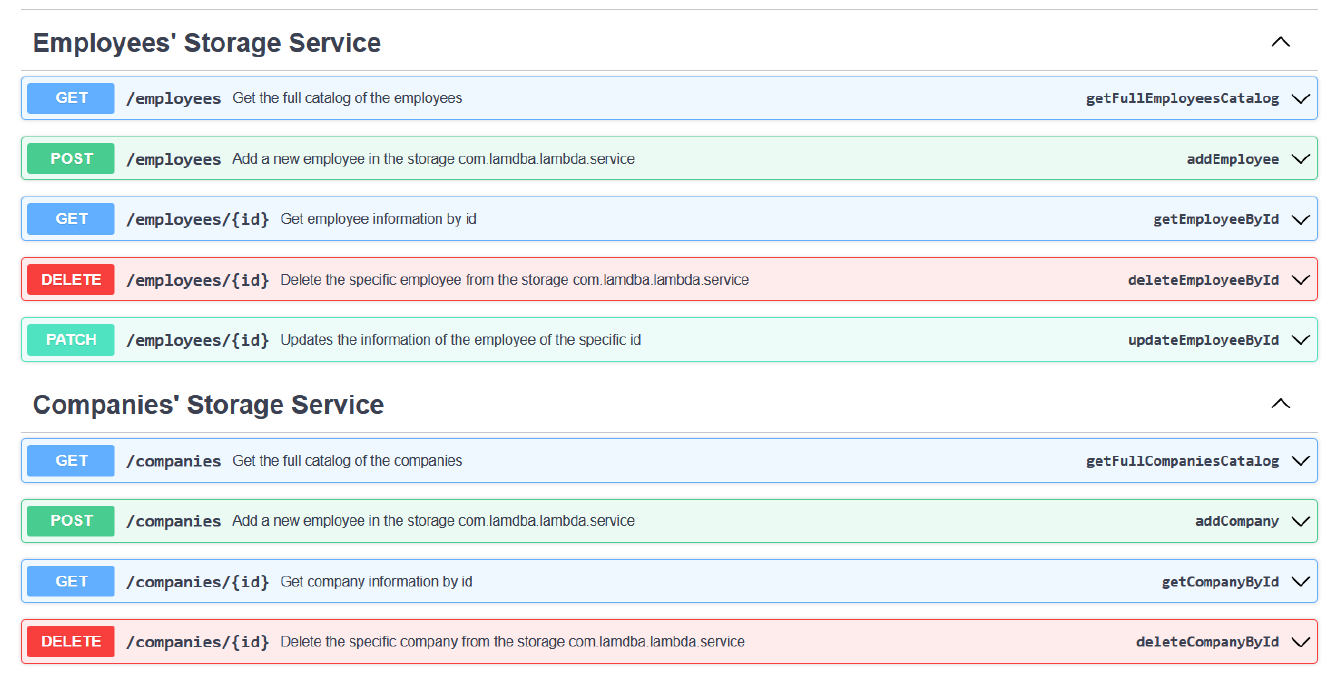

On the other hand, for the Rest API we decided to go more strictly and define a swagger specification that will be the one that can be served not only from the current frontend view layer of the application but also any other future project that might want to consume data from our backend.

In the end, the result was easy and pleasant to follow and implement:

Figure 1: Part of the Rest API swagger specification

Ways of working

At this point, it was quite evident what was the job. We only needed to separate the tasks in such a way that there were no dependencies between the work and prioritize in a way that would make the refactoring quicker with no back and forth or tons of git conflicts.

It was time to define some ways of working. We quickly agreed to introduce Agile methodologies in our project as we were already familiar with, and we knew the benefits of using it both in general but especially in such transformations. The only deviation from the Agile was that in this case we acted also as the Product Owner since we defined and created all the tickets necessary through an extensive and very careful set of meetings with the team writing the description as low level as possible and strictly defining the acceptance criteria for every of those tickets.

Finally, we continued with the normal Sprint Planning where we estimated the tickets and committed to them for specific deadlines respecting the general prioritization of the scopes.

Refactoring

Having everything written on bullets, on tables and as acceptance criteria, we could not wait to start refactoring. Usually, when refactoring even a small class, we felt like the whole project was at risk, because of bugs or behavior that was not clear. This time, the refactoring felt like a relief. The team was meeting every morning to share progress and sync when working on similar or dependent functionalities.

We started with the database items that had a big impact on the domain model but also the front-end payload. Since the relationships were logical now, we noticed that the frontend was mostly code deletions, and the needed data could be easily fetched in a logical manner from the responded payloads without the need for data merge or extra frontend calculations.

For every family of endpoints refactored, we wrote a big set of tests that thoroughly assert the necessary behavior of the Rest in terms of status codes, payload data and even response times! For every functionality touched, we wrote a new set of positive and negative use cases that left no room for ambiguity or bugs. And finally, we created a large new set of integration tests that are as close as possible to the real application scenarios, meaning that a real database is used and a real Active Directory running end-to-end scenarios that cover most of the code.

Up to this point, we treated the business layer as a white box. After moving to the second phase, having the database and the Rest API refactored and the functionality still untouched, we revisited the core code logic where we fixed all these static code analysis items and items that we agreed and accepted as design rules for better code quality. We abstracted some classes with the use of Java Generics so that code was not duplicated, and calculations were offered on a layer that was agnostic to the end engineer for a specific package through contracts based on interfaces. The code became intuitive enough just from the methods signatures! When we added Javadoc to the methods the whole layer was jewel bright and since then a simple mouse over a method gives all the necessary info in a compact and quickly accessible form.

But we did not stop there. Following the modern computer engineering recommendations, we did revisit our libraries and upgraded them to the latest versions while removing some of them that were unnecessary. We did stress tests while observing the resources consumption so that we could end up in a more efficient resources plan – memory, CPU, storage – in the different parts of the system. The results were outstanding! We concluded that we could use a cheaper azure App Service Plan and distribute the resource in a way that the application performed more efficiently!

Every new change pushed was then reviewed by the most competent engineers in the area through GitHub before proceeding in a staging environment for extensive testing and eventually released in production. A process that helped to minimize mistaken commits, ensured that the acceptance criteria were met but also naturally communicated knowledge and info between the team members and accelerating the competence build-up of the engineers.

Conclusion

Impediments.

The road towards an application level refactor is not easy. The most important aspect is for the team to manage to align on a common view on the ways of working. People feel more comfortable with different things based on their experience. One approach might seem the best choice for an engineer while the others will see this as sweeping the problem under the carpet. And the discussions are getting more complex when there are no strict design rules that mandate one or the other, but rather are more than one alternative philosophies.

Such conflicts are resolved after deep engineering discussions that highlight pros and cons for each of the approaches, but also compared to the pragmatic needs of the application. In the end though, the approach followed is a solid acquis of the team that boosts the development and the robustness of the project.

Benefits.

In general, every decision made during the process triggered discussions and deep thinking that eventually developed each engineer personally and the team. Now the engineers are more experienced. The project is newcomers friendly with clean code and easy to reuse modules. SOLID principles are respected and overall, the application future seems easily maintainable and extensible while as we speak, we already planned the scaling to cover more departments of our company!

Tradeoffs and Lessons learned.

There is no good without a cost though. During the discussions, the redesign, and the refactoring we completely paused new features. Our bug fixing and support responses were late. But we learnt some lessons in the process.

The engineering community ceaselessly revisits, enhance, adapt, and improve the guidelines and the best practices for each technology, language, and pattern. The suggestions are the product of experience, discussions, argumentation, and usually scientific research. A project that does not follow these rules is certainly going to last shorter while the engineers will face difficulties that will need more complex workarounds every time as the time goes by until the cost of a change will be that high or the bugs that much, that the introduction of this change will be not worth. The sooner a team detects that their project might need some redesign/refactoring the easier the process. Don’t hesitate to highlight the problems of your project that keep back the future of the application. A repository that is according to best practices, modern principles, based on patterns and following design rules feels like home. Engineers are happy to develop and feel free to innovate and propose improvements and new features while ensuring that this will be a smooth process without unexpected limitations.